Overview

Artificial Intelligence has crossed a critical inflection point. Organizations are no longer asking whether to adopt AI — they are asking how to deploy it responsibly, scalably, and with measurable business value. At ITCrats, we bridge the gap between AI potential and enterprise reality.

Our Generative AI and Agentic AI practice is purpose-built for enterprises that demand more than prototypes. We architect, build, and operationalize AI systems that reason, plan, and act autonomously — integrating seamlessly with your existing data infrastructure, cloud platforms, and compliance frameworks. Whether you are deploying Large Language Models (LLMs) to accelerate knowledge work, building multi-agent systems to automate complex workflows, or embedding AI governance into every layer of your stack, ITCrats brings the engineering depth and domain expertise to make it production-ready.

Our practice eliminates the three most common failure modes of enterprise AI initiatives:

• AI that never leaves the sandbox — impressive demos that fail in production at scale.

• AI without governance — uncontrolled outputs that introduce legal, regulatory, and reputational risk.

• AI without memory or context — disconnected systems that fail to compound value across interactions.

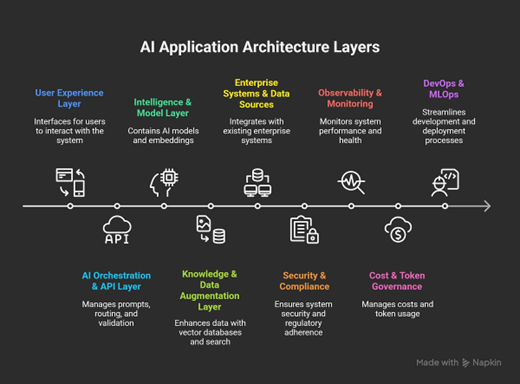

At the core of every ITCrats GenAI engagement is a production-hardened platform architecture that connects your enterprise data to foundation models through secure, observable, and governed pipelines. The accompanying diagram illustrates the nine interdependent layers that make up a complete enterprise AI application stack, from the user experience layer through to DevOps and MLOps automation.

ITCrats delivers a comprehensive, end-to-end AI capability portfolio spanning strategy, engineering, governance, and managed operations:

AI Strategy & Roadmap Advisory

We work with business and technology leadership to define a clear, prioritized AI roadmap that aligns with your organizational goals, data maturity, and competitive landscape. Our advisory engagements include AI use-case discovery workshops, build-vs-buy analysis, LLM platform selection, and governance framework design. We translate AI ambition into a phased execution plan with defined value milestones — ensuring leadership alignment from day one.

Large Language Model (LLM) Platform Engineering

We design and deploy enterprise-grade LLM platforms built for performance, security, and scale. Our engineering teams implement Retrieval-Augmented Generation (RAG) architectures, fine-tuning pipelines, prompt engineering frameworks, and model evaluation systems across leading platforms including Azure OpenAI, AWS Bedrock, and Google Vertex AI. Every deployment is instrumented for latency and token-cost tracking, hallucination detection, and model-drift monitoring, giving your teams full observability into AI behavior in production.
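To illustrate what per-request instrumentation can look like, here is a minimal Python sketch that covers latency and token cost only. It assumes a generic model call that returns text plus token counts; `ModelResult`, `instrumented`, the stub model, and the per-1,000-token prices are hypothetical placeholders, not part of any provider SDK.

```python
import time
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class ModelResult:
    """What a (hypothetical) provider client returns for one completion."""
    text: str
    input_tokens: int
    output_tokens: int

@dataclass
class CallMetrics:
    latency_ms: float
    input_tokens: int
    output_tokens: int
    cost_usd: float

# Hypothetical prices in USD per 1,000 tokens; real prices differ by provider and model.
PRICE_PER_1K_INPUT = 0.005
PRICE_PER_1K_OUTPUT = 0.015

def instrumented(call_model: Callable[[str], ModelResult],
                 sink: List[CallMetrics]) -> Callable[[str], str]:
    """Wrap a model call so each request records latency, token counts, and cost."""
    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        result = call_model(prompt)
        latency_ms = (time.perf_counter() - start) * 1000
        cost = (result.input_tokens / 1000 * PRICE_PER_1K_INPUT
                + result.output_tokens / 1000 * PRICE_PER_1K_OUTPUT)
        sink.append(CallMetrics(latency_ms, result.input_tokens,
                                result.output_tokens, round(cost, 6)))
        return result.text
    return wrapper

# Usage with a stub standing in for a real model endpoint; it counts words as "tokens".
def stub_model(prompt: str) -> ModelResult:
    return ModelResult(text="ok", input_tokens=len(prompt.split()), output_tokens=1)

metrics: List[CallMetrics] = []
ask = instrumented(stub_model, metrics)
answer = ask("summarize the quarterly claims report")
```

In a real deployment the `sink` would more likely be a metrics backend (for example, an exporter scraped by Prometheus) than an in-memory list.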

Agentic AI Systems Design & Deployment

We architect multi-agent systems that move beyond single-model inference to build AI that plans, reasons, delegates tasks, and executes across tools and systems autonomously. Our agentic AI implementations leverage frameworks including LangGraph, AutoGen, CrewAI, and custom agent orchestrators to deliver intelligent automation for complex, multi-step workflows. We design agent memory systems, tool-use protocols, and human-in-the-loop checkpoints that make autonomous AI trustworthy and auditable — not just fast.

Generative AI Application Development

We build production-ready GenAI applications tailored to your business domain — from intelligent document processing and conversational enterprise search to AI-powered customer engagement platforms and automated reporting systems. Our development practice follows MLOps and LLMOps principles, ensuring every application ships with CI/CD pipelines, version-controlled prompts, automated model evaluation gates, and rollback capabilities.

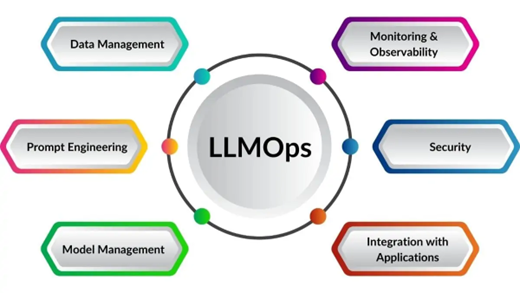

LLMOps — Operationalizing AI at Enterprise Scale

Deploying an LLM is straightforward. Keeping it accurate, cost-efficient, and reliable in production is the real engineering challenge. ITCrats implements complete LLMOps pipelines managing the full lifecycle — automated retraining triggers, prompt versioning, A/B evaluation frameworks, cost monitoring, and multi-environment promotion workflows (Dev → UAT → Production).

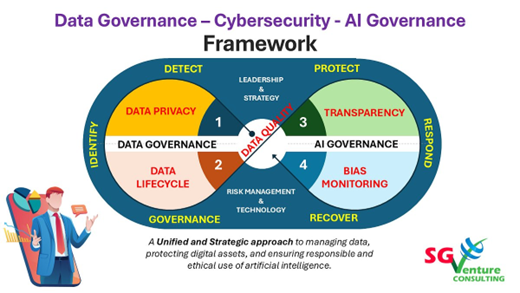

AI Governance, Risk & Compliance

As regulatory scrutiny of AI systems intensifies globally, governance is no longer optional — it is a business requirement. ITCrats implements comprehensive AI governance frameworks covering model transparency, bias detection, explainability, data privacy, and audit trail management, suited to regulated industries including healthcare, financial services, and insurance.

Our solution portfolio spans the full spectrum of GenAI and Agentic AI applications:

- Custom AI assistants and copilots embedded in enterprise workflows, connected to your internal knowledge bases, systems of record, and APIs.

- End-to-end automation of document intake, classification, extraction, and routing using LLMs, reducing manual processing time and error rates.

- RAG-powered knowledge platforms that surface accurate, source-cited answers from across your enterprise data, breaking down information silos.

- Multi-agent orchestration systems that autonomously execute multi-step business processes, from provider enrollment to supply chain exception handling.

- Natural language interfaces to your analytics stack, enabling business users to query data and receive AI-narrated insights without SQL or BI expertise.

- Enterprise-grade virtual agents with persistent memory, multi-turn reasoning, and seamless escalation paths, built on Microsoft Copilot Studio and Amazon Lex.

Agentic AI systems represent the next frontier beyond single-model inference. Rather than a model simply responding to a query, agentic systems decompose complex goals into sub-tasks, delegate those tasks to specialized agents, manage state across interactions, and iteratively refine their outputs until a goal is achieved. The diagram below illustrates the four core agent types that ITCrats deploys within enterprise multi-agent architectures.

Core architectural patterns we implement include:

- Supervisor-worker agent topologies for hierarchical task delegation with clear escalation paths.

- Peer-to-peer agent collaboration frameworks where specialized agents negotiate and coordinate autonomously.

- Stateful agent memory systems using vector databases and structured state stores for persistent context across sessions.

- Tool-use protocols enabling agents to call APIs, execute code, query databases, and interact with enterprise systems.

- Human-in-the-loop (HITL) checkpoints with configurable approval gates for risk-sensitive decision steps.
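The supervisor-worker topology and HITL approval gates listed above can be sketched in plain Python. This is a minimal illustration, assuming the goal has already been decomposed into tasks and that workers are simple callables; `Task`, `Supervisor`, and the `approve` callback are hypothetical names, not the API of LangGraph, AutoGen, or CrewAI.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class Task:
    name: str
    worker: str                  # which specialized worker should handle it
    payload: str
    risk_sensitive: bool = False

@dataclass
class Supervisor:
    workers: Dict[str, Callable[[str], str]]
    approve: Callable[[Task], bool]            # human-in-the-loop approval gate
    log: List[str] = field(default_factory=list)

    def run(self, tasks: List[Task]) -> Dict[str, str]:
        results: Dict[str, str] = {}
        for task in tasks:
            # HITL checkpoint: risk-sensitive steps proceed only with explicit approval.
            if task.risk_sensitive and not self.approve(task):
                self.log.append(f"{task.name}: escalated (approval denied)")
                results[task.name] = "ESCALATED"
                continue
            worker = self.workers.get(task.worker)
            if worker is None:
                # Escalation path: no specialized worker is registered for this task.
                self.log.append(f"{task.name}: escalated (no worker '{task.worker}')")
                results[task.name] = "ESCALATED"
                continue
            results[task.name] = worker(task.payload)
            self.log.append(f"{task.name}: done by {task.worker}")
        return results

workers = {
    "extractor": lambda text: text.upper(),
    "payments": lambda text: f"paid {text}",
}
# Stand-in for a human reviewer: this policy denies every payment step.
supervisor = Supervisor(workers, approve=lambda task: task.worker != "payments")
results = supervisor.run([
    Task("extract", "extractor", "invoice 42"),
    Task("pay", "payments", "invoice 42", risk_sensitive=True),
    Task("archive", "archiver", "invoice 42"),
])
```

The `log` list doubles as a simple audit record of which worker handled each step and why a step was escalated.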

Agent Memory & Long-Horizon Reasoning

ITCrats implements multi-tier agent memory architectures that give your AI systems the ability to remember, learn, and continuously improve. We design episodic memory (recall of specific past interactions), semantic memory (accumulated knowledge from enterprise data), and procedural memory (learned workflows and decision heuristics), all integrated into a cohesive memory management layer built on vector search platforms such as Azure AI Search, Pinecone, and Weaviate.
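As a rough illustration of how the three memory tiers might sit behind one interface, the Python sketch below keeps every tier in process. It is a toy under loud assumptions: a word-count cosine similarity stands in for real embeddings, in-memory lists stand in for a vector store, and procedures are matched by a crude name lookup. `AgentMemory` and its methods are hypothetical.

```python
import math
from collections import Counter, deque
from typing import Dict, List, Tuple

def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (stand-in for a real embedding model)."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class AgentMemory:
    def __init__(self, episodic_capacity: int = 100):
        self.episodic: deque = deque(maxlen=episodic_capacity)  # specific past interactions
        self.semantic: List[Tuple[str, Counter]] = []           # accumulated knowledge
        self.procedural: Dict[str, List[str]] = {}              # learned workflows

    def remember_interaction(self, text: str) -> None:
        self.episodic.append(text)  # oldest interactions drop off when capacity is reached

    def add_knowledge(self, fact: str) -> None:
        self.semantic.append((fact, embed(fact)))

    def learn_procedure(self, name: str, steps: List[str]) -> None:
        self.procedural[name] = steps

    def recall(self, query: str, k: int = 1) -> dict:
        q = embed(query)
        ranked = sorted(self.semantic, key=lambda item: cosine(q, item[1]), reverse=True)
        return {
            "recent": list(self.episodic)[-k:],
            "knowledge": [fact for fact, _ in ranked[:k]],
            "procedures": {n: s for n, s in self.procedural.items() if n in query.lower()},
        }

mem = AgentMemory(episodic_capacity=2)
mem.remember_interaction("user asked about claim 17")
mem.remember_interaction("user uploaded license scan")
mem.remember_interaction("user asked for enrollment status")
mem.add_knowledge("provider enrollment requires a valid state license")
mem.add_knowledge("claims are paid within 30 days")
mem.learn_procedure("enrollment", ["verify license", "check sanctions", "approve"])
context = mem.recall("what does enrollment require", k=1)
```

A production design would persist each tier separately (for example, a vector index for semantic memory and a structured store for procedures) rather than holding them in one object.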

Autonomous Workflow Automation

Agentic AI unlocks a new category of process automation that goes far beyond traditional RPA and rule-based systems. ITCrats designs autonomous workflow systems that handle ambiguity, make judgment calls, and adapt to changing conditions — capabilities that traditional automation tools simply cannot achieve. Applications include automated healthcare provider enrollment, multi-system reconciliation, regulatory filing assistance, and intelligent procurement workflows, each instrumented with full audit trails and configurable human oversight.

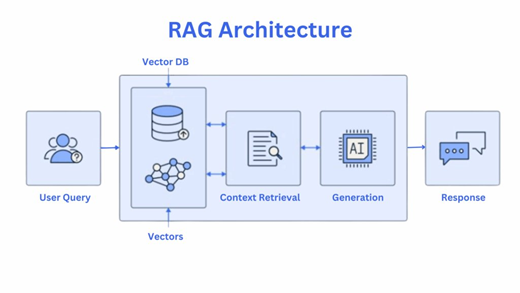

RAG is the cornerstone of enterprise-grade GenAI applications. Rather than relying solely on the pre-trained knowledge of a foundation model — which becomes outdated and cannot access proprietary data — RAG architectures dynamically retrieve the most relevant content from your curated knowledge bases at inference time, grounding the model's responses in your source-of-truth data.

ITCrats implements advanced RAG pipelines incorporating:

- Hybrid search (dense vector embeddings + sparse keyword retrieval) to maximize recall.

- Metadata filtering and document-level access control for secure, role-appropriate responses.

- Chunking strategy optimization to preserve semantic context across document types.

- Re-ranking layers using cross-encoder models to prioritize the most relevant retrieved passages.

- Evaluation frameworks using RAGAS and custom metrics to continuously measure answer faithfulness, relevance, and groundedness.
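One common way to combine dense and sparse retrieval is reciprocal rank fusion (RRF). The Python sketch below shows that idea together with metadata-based access filtering, under stated assumptions: Okapi BM25 serves as the sparse retriever, a character-trigram cosine similarity stands in for real dense embeddings, and the `Doc` type, `allowed_roles` field, and fusion constant `k=60` are illustrative choices rather than a specific product's API. Chunking and cross-encoder re-ranking are omitted.

```python
import math
from collections import Counter
from dataclasses import dataclass
from typing import List, Set

@dataclass
class Doc:
    doc_id: str
    text: str
    allowed_roles: Set[str]   # hypothetical access-control metadata

def tokenize(text: str) -> List[str]:
    return text.lower().split()

def bm25_rank(query: str, docs: List[Doc], k1: float = 1.5, b: float = 0.75) -> List[str]:
    """Sparse keyword ranking with Okapi BM25."""
    tokenized = [tokenize(d.text) for d in docs]
    avgdl = sum(len(t) for t in tokenized) / len(tokenized)
    n = len(docs)
    scores = {}
    for doc, toks in zip(docs, tokenized):
        tf = Counter(toks)
        score = 0.0
        for term in set(tokenize(query)):
            if tf[term] == 0:
                continue
            df = sum(1 for t in tokenized if term in t)
            idf = math.log(1 + (n - df + 0.5) / (df + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores[doc.doc_id] = score
    return sorted(scores, key=scores.get, reverse=True)

def char_trigrams(text: str) -> Counter:
    padded = f"  {text.lower()} "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))

def dense_rank(query: str, docs: List[Doc]) -> List[str]:
    """Stand-in for vector search: cosine similarity of character-trigram vectors."""
    q = char_trigrams(query)
    q_norm = math.sqrt(sum(v * v for v in q.values()))
    scores = {}
    for doc in docs:
        d = char_trigrams(doc.text)
        dot = sum(q[g] * d[g] for g in q)
        d_norm = math.sqrt(sum(v * v for v in d.values()))
        scores[doc.doc_id] = dot / (q_norm * d_norm) if q_norm and d_norm else 0.0
    return sorted(scores, key=scores.get, reverse=True)

def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Each ranking contributes 1 / (k + rank); higher fused score ranks first."""
    fused: Counter = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in fused.most_common()]

def hybrid_search(query: str, docs: List[Doc], role: str, top_k: int = 3) -> List[str]:
    # Metadata filter first, so restricted documents never reach either retriever.
    visible = [d for d in docs if role in d.allowed_roles]
    if not visible:
        return []
    fused = reciprocal_rank_fusion([bm25_rank(query, visible), dense_rank(query, visible)])
    return fused[:top_k]

docs = [
    Doc("prior-auth", "prior authorization is required for advanced imaging", {"clinician", "admin"}),
    Doc("billing", "claims are paid within 30 days of submission", {"admin"}),
    Doc("handbook", "employee handbook and leave policy", {"admin"}),
]
clinician_hits = hybrid_search("prior authorization for imaging", docs, role="clinician")
admin_hits = hybrid_search("prior authorization for imaging", docs, role="admin")
```

RRF needs only ranks, not comparable scores, which is why it is a convenient way to merge retrievers whose raw scores live on different scales.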

Model Fine-Tuning & Domain Adaptation

When general-purpose models are insufficient for specialized domain tasks, ITCrats engineers fine-tuned models adapted to your specific vocabulary, reasoning patterns, and output requirements. We implement parameter-efficient fine-tuning techniques including LoRA and QLoRA — enabling cost-effective adaptation of large-scale models without full retraining. Fine-tuned models are versioned, evaluated against domain-specific benchmarks, and deployed through managed model registries for controlled rollout.
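The arithmetic behind "parameter-efficient" is short enough to show directly. LoRA freezes a pretrained weight matrix $W_0$ and trains only a low-rank update $BA$, scaled by $\alpha / r$. The dimensions below are illustrative, chosen to resemble a single 4096 × 4096 projection matrix; they are not figures from a specific engagement.

```latex
\begin{aligned}
W &= W_0 + \Delta W = W_0 + \frac{\alpha}{r}\, B A,
  \qquad W_0 \in \mathbb{R}^{d \times k},\; B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k) \\
\text{trainable parameters} &: \quad dk \ \text{(full fine-tuning)} \quad \text{vs.} \quad r(d + k) \ \text{(LoRA)} \\
d = k = 4096,\ r = 8 &: \quad dk = 16{,}777{,}216, \qquad r(d + k) = 8 \times 8192 = 65{,}536 \ (\approx 0.39\%)
\end{aligned}
```

QLoRA applies the same low-rank update but additionally stores the frozen $W_0$ in 4-bit quantized form, which further reduces the GPU memory needed during fine-tuning.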

Prompt Engineering & Evaluation Frameworks

Reliable AI outputs require systematic prompt engineering, not ad hoc experimentation. ITCrats implements structured prompt development workflows including chain-of-thought prompting, few-shot prompting, self-consistency techniques, and prompt templating systems. All prompts are version-controlled, tested against curated evaluation datasets, and monitored in production for output quality drift, ensuring your AI applications remain accurate as underlying models evolve.
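A minimal sketch of version-controlled prompts evaluated against a curated dataset follows, assuming Python's standard `string.Template` for templating and a stub in place of a real model. `PromptRegistry`, the content-hash versioning scheme, the exact-match scoring rule, and the sample dataset are all hypothetical; production setups typically keep prompts in Git or a prompt store and use richer metrics.

```python
import hashlib
from string import Template
from typing import Callable, Dict, List, Optional, Tuple

class PromptRegistry:
    """Stores immutable, content-addressed prompt versions per prompt name."""
    def __init__(self) -> None:
        self._versions: Dict[str, List[Tuple[str, str]]] = {}

    def register(self, name: str, template: str) -> str:
        version = hashlib.sha256(template.encode()).hexdigest()[:8]
        self._versions.setdefault(name, []).append((version, template))
        return version

    def get(self, name: str, version: Optional[str] = None) -> Tuple[str, Template]:
        versions = self._versions[name]
        if version is None:
            version, text = versions[-1]          # latest version by default
        else:
            text = dict(versions)[version]
        return version, Template(text)

def evaluate(template: Template, model: Callable[[str], str], dataset: List[dict]) -> float:
    """Fraction of examples where the model's answer exactly matches the expected one."""
    hits = 0
    for example in dataset:
        prompt = template.substitute(question=example["question"])
        if model(prompt).strip().lower() == example["expected"].lower():
            hits += 1
    return hits / len(dataset)

registry = PromptRegistry()
v1 = registry.register("triage", "Question: $question\nAnswer:")
v2 = registry.register("triage", "You are a claims assistant. Reply with one word.\n"
                                  "Question: $question\nAnswer:")

def stub_model(prompt: str) -> str:
    # Pretends the more explicit v2 instruction produces a clean one-word answer.
    return "approve" if "one word" in prompt else "I think approve"

dataset = [{"question": "Is claim 17 within policy limits?", "expected": "approve"}]
scores = {v: evaluate(registry.get("triage", v)[1], stub_model, dataset) for v in (v1, v2)}
```

Because each version is identified by a hash of its text, an evaluation score can always be traced back to the exact prompt that produced it.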

ITCrats implements complete LLMOps pipelines that manage the full lifecycle of language models in production, closing the loop from model selection through continuous monitoring back to automated retraining triggers. The six pillars in the accompanying diagram represent the full scope of the ITCrats LLMOps practice.

ITCrats LLMOps engagements deliver measurable production outcomes:

- Automated model promotion pipelines with quality gates enforcing minimum accuracy thresholds before production release.

- Prompt versioning and A/B evaluation frameworks that compare model outputs across prompt variants at scale.

- Cost governance dashboards providing real-time visibility into token consumption, latency, and per-request cost attribution.

- Drift detection alerts that trigger automatic retraining workflows when model performance degrades beyond configurable thresholds.

- Multi-environment deployment orchestration spanning Dev, UAT, and Production with full rollback capability.
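The quality-gate and drift-detection outcomes above can be sketched as a few small Python functions. This is an illustration under assumptions: the metric names and thresholds are hypothetical, and drift is measured with the Population Stability Index (PSI), which is one common choice among several. The 0.2 alert level is a widely used rule of thumb, not a universal standard.

```python
import math
from typing import Dict, List, Tuple

def passes_quality_gate(metrics: Dict[str, float],
                        thresholds: Dict[str, float]) -> Tuple[bool, List[str]]:
    """Return (passed, failures); any metric below its minimum blocks promotion."""
    failures = [name for name, minimum in thresholds.items()
                if metrics.get(name, float("-inf")) < minimum]
    return (not failures, failures)

def psi(reference: List[float], current: List[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of scores in [0, 1]."""
    def proportions(values: List[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            counts[min(int(v * bins), bins - 1)] += 1   # clamp 1.0 into the last bin
        eps = 1e-6                                       # avoid log(0) for empty bins
        return [max(c / len(values), eps) for c in counts]
    ref, cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

DRIFT_THRESHOLD = 0.2   # hypothetical alert level

def should_trigger_retraining(reference: List[float], current: List[float]) -> bool:
    return psi(reference, current) > DRIFT_THRESHOLD

ok, failed = passes_quality_gate({"faithfulness": 0.91, "relevance": 0.78},
                                 {"faithfulness": 0.85, "relevance": 0.80})
reference = [i / 100 for i in range(100)]       # scores spread evenly across [0, 1)
shifted = [0.9 + i / 1000 for i in range(100)]  # scores crowded near the top
```

In a pipeline, `passes_quality_gate` would sit between environment stages (for example, UAT to Production), while the drift check would run on a schedule against live traffic.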

ITCrats governance frameworks are built for regulated industries such as healthcare, financial services, and insurance, where AI decisions must be defensible, traceable, and compliant with applicable standards.

ITCrats governance implementations cover:

- Model transparency and explainability using SHAP, LIME, and model cards aligned to NIST AI RMF.

- Bias detection and fairness auditing using IBM AIF360 and Microsoft Fairlearn with documented remediation workflows.

- Data privacy controls including PII masking, encryption at rest and in transit, and role-based access control on training data and inference logs.

- Compliance mapping to regulations and standards including HIPAA, SOC 2 Type II, the EU AI Act, ISO/IEC 42001, and the OWASP Top 10 for LLM Applications.

- Audit trail generation providing end-to-end traceability from input data through model decision to business outcome.
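To make the data-privacy and audit-trail items more concrete, here is a hedged Python sketch. The regular expressions cover only a few illustrative U.S.-style patterns (email, SSN, phone) and are not a complete PII detector; the hash-chained log is one simple way to make an audit trail tamper-evident. `mask_pii`, `AuditTrail`, and the record fields are hypothetical.

```python
import hashlib
import json
import re
from typing import Dict, List

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

def mask_pii(text: str) -> str:
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

def _digest(body: Dict[str, str]) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

class AuditTrail:
    """Append-only log where each entry embeds the previous entry's hash."""
    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    def record(self, model_input: str, decision: str, outcome: str) -> Dict[str, str]:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"input": mask_pii(model_input), "decision": decision,
                "outcome": outcome, "prev_hash": prev_hash}
        body["hash"] = _digest(body)   # hash computed before the "hash" key is added
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True

trail = AuditTrail()
trail.record("Member jane.doe@example.com, SSN 123-45-6789, requests prior auth",
             decision="route_to_reviewer", outcome="pending")
trail.record("Call back at 555-867-5309", decision="schedule_callback", outcome="done")
```

Masking happens before anything is written, so the audit log itself never stores the raw identifiers it is meant to protect.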

ITCrats' Generative AI and Agentic AI capabilities are deployed across industries with sector-specific depth:

Healthcare: Automate provider enrollment and credentialing workflows, accelerate clinical documentation with AI-assisted coding and summarization, implement HIPAA-compliant AI governance frameworks, and enable intelligent prior authorization processing.

Financial services: Deploy AI-powered fraud narrative generation, automate regulatory reporting, build intelligent loan underwriting assistants, and implement real-time transaction anomaly explanation systems that convert model predictions into human-readable risk narratives.

Retail and e-commerce: Build AI-powered product description generation at scale, deploy personalized recommendation agents, automate supplier communication workflows, and implement conversational shopping assistants with persistent customer memory.

Asset-intensive industries: Implement AI for equipment maintenance documentation summarization, regulatory compliance report generation, field technician AI assistants with offline capability, and predictive maintenance narrative systems.

Public sector: Deploy secure GenAI environments for sensitive data analysis, build citizen services conversational AI, automate policy document analysis and summarization, and implement AI for grant application processing.

Manufacturing: Automate quality control report generation, deploy AI for supplier contract analysis and risk flagging, build intelligent production scheduling assistants, and implement AI-powered root cause analysis for production failures.

ITCrats engineers are fluent across the leading AI/ML platforms, cloud providers, and open-source frameworks — giving you the flexibility to build on the stack that best fits your enterprise standards and existing investments.

Foundation models: Azure OpenAI (GPT-4o, o1), Anthropic Claude, AWS Bedrock (Titan, Llama), Google Gemini, Mistral, Meta Llama 3

Agent frameworks: LangChain, LangGraph, Microsoft AutoGen, CrewAI, Semantic Kernel, Amazon Bedrock Agents

Vector databases and search: Azure AI Search, Pinecone, Weaviate, ChromaDB, FAISS, pgvector

MLOps and observability: Azure Machine Learning, Databricks MLflow, Weights & Biases, Evidently AI, Arize Phoenix

Cloud platforms: Microsoft Azure, Amazon Web Services (AWS), Google Cloud Platform (GCP)

Governance, safety, and monitoring: Azure AI Content Safety, IBM AIF360, Microsoft Fairlearn, Prometheus, Grafana, custom audit pipelines

Engineering and DevOps: Python, FastAPI, Docker, Kubernetes, GitHub Actions, Azure DevOps, Terraform

The AI services market is crowded with vendors who sell vision and deliver prototypes. ITCrats is different. We are a delivery-focused engineering organization that measures success by AI systems in production — not by compelling slide decks.

Engineering-first delivery — Every engagement is led by senior AI and data engineers with hands-on production deployment experience. We do not outsource to generalists.

Enterprise integration expertise — Every system we build is designed to integrate with your existing cloud infrastructure, data platforms, security frameworks, and compliance requirements from day one.

Governance by design — AI governance is embedded in our delivery methodology — not retrofitted at the end. Auditability, explainability, and bias controls are first-class engineering requirements.

Domain depth in regulated industries — Our teams bring deep experience in healthcare, financial services, and insurance — where AI failures carry serious legal, financial, and patient safety consequences.

Agile delivery with measurable milestones — We deliver value in iterations. Each sprint produces a working, tested increment. Business stakeholders see running AI systems early and often.

Whether you are launching your first enterprise AI initiative or scaling an existing one to production, ITCrats has the engineering capability, domain expertise, and governance discipline to accelerate your journey.

Contact us today to schedule an AI Discovery Workshop — a structured engagement where our architects and engineers assess your current state, identify your highest-value AI opportunities, and design a pragmatic roadmap to production-ready AI systems.